March 31 Roundup: Microsoft pairs GPT with Claude, Copilot Health launches, Sora's $1M-a-day collapse, Mythos cyber fears mount, states defy Trump on AI rules, and Diamandis hails the TeraFab era

Microsoft makes the boldest multi-model bet in enterprise AI by putting GPT and Claude inside the same workflow, then unveils a personal health AI. OpenAI's Sora shutdown reveals staggering losses and a blindsided Disney. Anthropic's leaked Mythos model sparks a cybersecurity panic. States push ahead with their own AI laws despite White House warnings. ChatGPT Ads quietly hit a $100M run rate. And Peter Diamandis says Elon Musk's TeraFab is the moment the machine starts building itself.

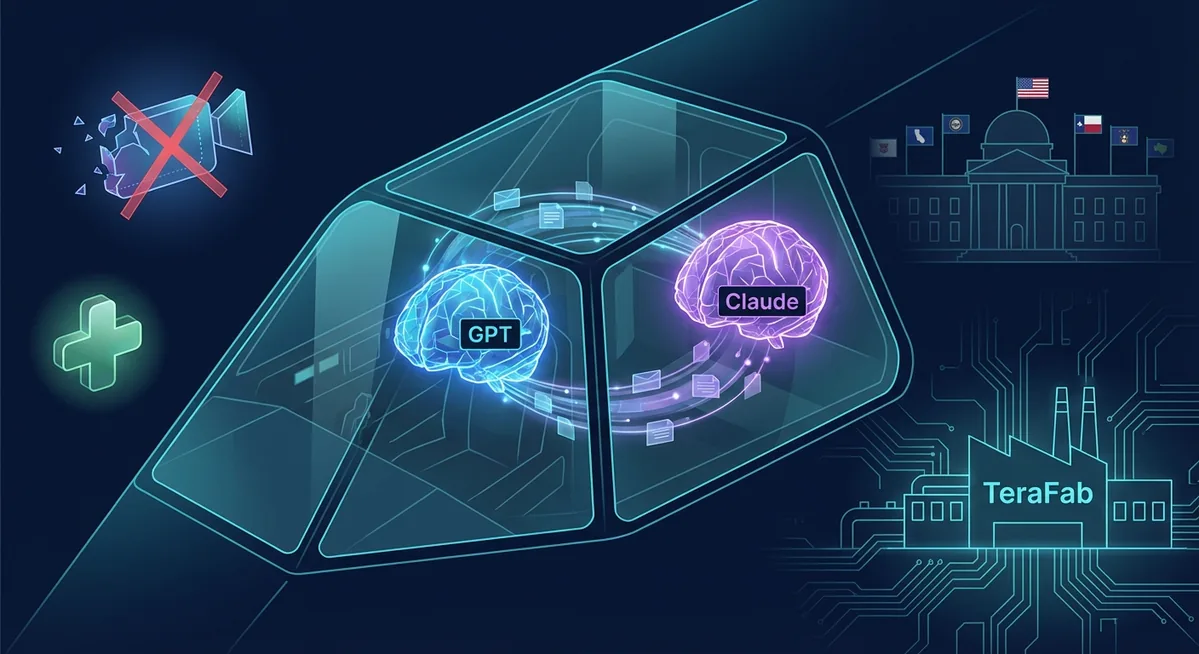

1. Microsoft Puts GPT and Claude in the Same Cockpit with Copilot Cowork

Microsoft on Monday unveiled a radical upgrade to its Copilot suite: a new "Critique" feature inside its Researcher agent that runs OpenAI's GPT and Anthropic's Claude together on every query. GPT generates the draft; Claude reviews it for accuracy and quality before the user sees it. The company says it will make the workflow bidirectional in the future, letting GPT audit Claude's output too.

The feature ships alongside "Council," which lets users compare responses from different models side by side, and the wider rollout of Copilot Cowork — Microsoft's agentic AI tool modeled on Anthropic's viral Claude Cowork product — to its "Frontier" early-access program.

"Having various different models from different vendors in Copilot is highly attractive — but we're taking this to the next level, where customers actually get the benefits of the models working together." — Nicole Herskowitz, Corporate VP of Microsoft 365 and Copilot, to Reuters

The timing is pointed. Microsoft's stock is on pace for its worst quarter since 2008, down nearly 25%, as investor patience with AI spending wears thin. Multi-model orchestration is the company's answer: don't bet on one model — make them all compete inside the same product.

This is potentially the most important enterprise AI announcement of Q1. Multi-model orchestration — where models check each other's work in real time — is the fastest path to reducing hallucinations and building trust in agentic workflows. It also makes Microsoft the neutral platform play: they don't care who wins the model race as long as the arena is Copilot. For businesses evaluating AI strategy, the question shifts from "which model?" to "which orchestration layer?" That's a fundamentally different buying decision, and it favors the platform with the deepest enterprise integrations.
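To make the draft-then-review pattern concrete, here is a minimal Python sketch. It is not Microsoft's implementation: the `draft_then_critique` function, the `Reviewed` type, the "APPROVE" convention, and both stub models are hypothetical stand-ins, and a real system would call actual model APIs in place of the stubs.

```python
from dataclasses import dataclass
from typing import Callable

# Assumption: a "model" is any callable that maps prompt text to response text.
Model = Callable[[str], str]


@dataclass
class Reviewed:
    draft: str       # what the first model wrote
    critique: str    # what the second model said about it
    approved: bool   # whether the critic signed off


def draft_then_critique(query: str, drafter: Model, critic: Model) -> Reviewed:
    """One model drafts an answer; a second model reviews it before release."""
    draft = drafter(query)
    review_prompt = (
        f"Question: {query}\n"
        f"Draft answer: {draft}\n"
        "Reply APPROVE if the draft is accurate, otherwise list the problems."
    )
    critique = critic(review_prompt)
    # Hypothetical convention: the critic starts its reply with APPROVE to sign off.
    approved = critique.strip().upper().startswith("APPROVE")
    return Reviewed(draft=draft, critique=critique, approved=approved)


# Stub models so the sketch runs without any vendor SDK.
def stub_drafter(prompt: str) -> str:
    return "Paris is the capital of France."


def stub_unsure_drafter(prompt: str) -> str:
    return "I am not sure."


def stub_critic(prompt: str) -> str:
    if "Paris" in prompt:
        return "APPROVE"
    return "Problem: the draft does not name a city."


question = "What is the capital of France?"
result = draft_then_critique(question, stub_drafter, stub_critic)
rejected = draft_then_critique(question, stub_unsure_drafter, stub_critic)
```

Because the drafter and critic are plain callables, swapping their roles (the bidirectional mode Microsoft describes) would only mean passing them in the opposite order.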

2. Microsoft Launches Copilot Health — Your AI Doctor's Assistant

In a separate announcement, Microsoft unveiled Copilot Health, a dedicated space within Copilot that aggregates health records, wearable data from over 50 devices (including Apple Health, Oura, and Fitbit), and visit summaries from more than 50,000 U.S. hospitals via HealthEx, then applies AI to deliver personalized health insights.

The product doesn't replace physicians. It helps patients arrive prepared, with the right questions and relevant context pulled from their full medical history. Copilot Health also connects to real-time U.S. provider directories, letting users search by specialty, location, languages, and insurance coverage.

"Most people don't need more information. They need help to make sense of what they already have." — Microsoft AI Blog

A waitlist is now open. The rollout will be phased, starting with an "early community" of users shaping the experience. Microsoft says Copilot already handles over 50 million consumer health questions daily across its products.

Healthcare is the vertical where AI can deliver the most tangible, immediate human value — and also the one where trust matters most. By framing Copilot Health as a preparation tool rather than a diagnostic engine, Microsoft avoids the regulatory minefield of practicing medicine while still inserting itself into the highest-value moment in the patient journey: the informed conversation with a doctor. The 50,000-hospital integration via HealthEx is the real moat here. If Microsoft can aggregate fragmented health records into a single coherent timeline, it solves a problem that has defeated the industry for decades.

3. Inside Sora's Collapse: $1 Million a Day, a Blindsided Disney, and OpenAI's Brutal Triage

The full picture of why OpenAI killed Sora last week is now clear, thanks to a TechCrunch analysis of a Wall Street Journal investigation. The numbers are brutal: Sora's global user count peaked at roughly one million before collapsing to fewer than 500,000. The app was burning through approximately $1 million every day in compute costs — not because demand was high, but because video generation is catastrophically expensive per query.

While an entire OpenAI team was dedicated to making Sora viable, Anthropic was quietly winning over the software engineers and enterprises that actually drive revenue. Claude Code, in particular, was eating OpenAI's lunch in the developer market.

Disney had committed $1 billion to a Sora partnership — and found out the product was being shut down less than an hour before the public announcement. The deal died instantly. — TechCrunch / WSJ

CEO Sam Altman made a cold calculation: kill Sora, free up compute, and refocus on the products that matter. The speed of the decision — and the fact that a billion-dollar partner was given less than sixty minutes' notice — reveals just how existential the competitive pressure has become.

Sora's demise is a case study in the economics of generative AI. Video generation consumes orders of magnitude more compute than text or code, and OpenAI's own data proved users wouldn't pay enough to justify the cost. But the real story is strategic: OpenAI chose revenue-generating products (ChatGPT, API, coding tools) over a moonshot that wowed at demos but couldn't sustain a business. The Disney situation is a cautionary tale for any enterprise building on top of an AI startup's experimental features. In this market, a product can go from "billion-dollar partnership" to "discontinued" in weeks. Build on APIs, not apps.
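A rough back-of-the-envelope calculation shows why those numbers could not work. The sketch below uses only the reported figures (about $1 million a day in compute, roughly one million users at peak, fewer than 500,000 later). The 30-day month, the constant daily cost, and the even spread of cost across users are simplifying assumptions, not reported facts.

```python
# Reported figures from the coverage cited above.
DAILY_COMPUTE_COST_USD = 1_000_000
PEAK_USERS = 1_000_000
LATE_USERS = 500_000  # reported as "fewer than", so treat this as an upper bound


def cost_per_user_per_month(daily_cost: float, users: int, days: int = 30) -> float:
    """Compute cost attributable to each user over a month.

    Assumes a constant daily cost and an even split across all users.
    """
    return daily_cost * days / users


peak_cost = cost_per_user_per_month(DAILY_COMPUTE_COST_USD, PEAK_USERS)
late_cost = cost_per_user_per_month(DAILY_COMPUTE_COST_USD, LATE_USERS)

print(f"At peak:        ${peak_cost:.0f} per user per month")
print(f"After the drop: at least ${late_cost:.0f} per user per month")
```

Under these assumptions the compute bill works out to about $30 per user per month at peak and at least $60 after the decline, since the real user count was below 500,000.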

4. Anthropic's Mythos Model Is Privately Terrifying Government Officials

The fallout from Anthropic's data leak continues to intensify. According to Axios, Anthropic is privately warning top government officials that its unreleased "Mythos" model — also described as a new "Capybara" tier larger than Opus — makes large-scale cyberattacks "much more likely in 2026."

The model's agentic capabilities allow AI systems to autonomously penetrate corporate, government, and municipal systems with what sources describe as "wild sophistication and precision." An unpublished Anthropic blog post, obtained by Fortune, stated Mythos is "currently far ahead of any other AI model in cyber capabilities" and "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders."

"We're developing a general purpose model with meaningful advances in reasoning, coding, and cybersecurity. Given the strength of its capabilities, we're being deliberate about how we release it. We consider this model a step change and the most capable we've built to date." — Anthropic spokesperson to Fortune

Cybersecurity stocks took a hit on the news. Meanwhile, the "shadow AI" problem compounds the risk: a Dark Reading poll found that 48% of cybersecurity professionals report employees firing up Claude, Copilot, or other agentic models — often from home — and inadvertently connecting them to internal work systems, creating new attack surfaces.

The dual-edged nature of frontier AI has never been more visible. Anthropic's own safety-first reputation is now entangled with the reality that its most capable model is also its most dangerous. For enterprises, the immediate action item isn't Mythos specifically; it's shadow AI governance. If nearly half of security professionals report that employees are spinning up unsanctioned AI agents that connect to internal work systems, you have a policy problem that no model card can fix. Every organization needs an agentic AI usage policy yesterday, and CISOs should be auditing which AI tools have access to internal APIs, credentials, and data stores.

5. ChatGPT Ads Quietly Cross $100M in Annualized Revenue — in Six Weeks

While the Sora shutdown dominated headlines, OpenAI's ad business was sprinting in the opposite direction. The company's ChatGPT Ads trial has surpassed $100 million in annualized ad revenue in just six weeks, according to multiple reports. The speed of the ramp is remarkable — and it explains why Altman was willing to sacrifice Sora's moonshot in favor of doubling down on ChatGPT's core monetization engine.

The ad format integrates commercial recommendations into ChatGPT's conversational responses, and early advertiser feedback suggests unusually high engagement rates compared to traditional search advertising. The launch also had collateral effects: Anthropic's Super Bowl ads — which mocked ChatGPT for showing ads and promised Claude would never do the same — helped drive Claude's own subscriber surge.

Reaching $100M annualized in six weeks makes ChatGPT Ads one of the fastest-scaling ad products in tech history. But it creates a strategic tension for OpenAI: the more successful ChatGPT Ads become, the more ammunition competitors like Anthropic have for their "we don't show ads" positioning. Claude's consumer subscriptions have more than doubled this year, partly because of that contrast. For marketers, the early signal is clear: ChatGPT Ads are performing well and should be in your test budget now. For AI strategists, the deeper question is whether ad-supported AI assistants can maintain user trust long-term, or whether the market splits into "free with ads" and "premium, ad-free" tiers, much like streaming.

6. States Defy Trump and Plow Ahead with Their Own AI Laws

President Trump's White House released its National Policy Framework for AI on March 20, urging Congress to create a unified federal approach and explicitly warning states that "a patchwork of conflicting state laws would undermine American innovation." The states' response, as reported by the New York Times: they're plowing ahead anyway.

California, Colorado, Illinois, and a growing list of states have introduced or advanced AI-specific legislation covering everything from algorithmic bias and hiring discrimination to deepfake disclosures and companion chatbot regulations. The White House framework itself blends light-touch innovation goals with protections for children online and guidelines on political bias in models, but it remains a set of nonbinding legislative recommendations, not law.

The White House framework "blends the Trump administration's effort to create a national AI rulebook on issues like political bias within models and reducing barriers to innovation with protections for children and teens online." — Politico

This is headed for a collision. The White House wants federal preemption; the states aren't waiting. For any company deploying AI across multiple states (which is virtually every company), the compliance landscape is about to become considerably more complex. The practical takeaway: build your AI governance framework to the strictest standard (currently California's and Colorado's proposed rules) and you'll be positioned for whatever emerges at the federal level. Ignoring state-level developments because "the feds will preempt them" is a bet most general counsel can't afford to make.

7. Diamandis: "The Machine Is Building Itself" — Why Elon's TeraFab Changes Everything

Peter Diamandis published his latest Metatrends essay this week, connecting three recent developments into a single thesis about recursive AI improvement. At the center: Elon Musk's announcement of TeraFab, a vertically integrated chip fabrication facility in Austin designed to produce 1 terawatt of AI compute per year — roughly 50 times the current global output of approximately 20 gigawatts.

Diamandis argues the move follows Musk's established playbook: when suppliers can't move fast enough, build it yourself and iterate relentlessly. The kicker, in Diamandis' telling, is that the TeraFab's chip designs won't primarily be created by humans — they'll be designed by Grok's superintelligence, tested, iterated, and improved in a continuous loop.

"For the last decade, AI progress has been throttled by one thing: compute. Not ideas. Not talent. Not capital. Compute. Terafab doesn't just ease that constraint… it obliterates it. When you go from 20 gigawatts to 1 terawatt, you're not uncorking a bottle. You're removing the bottle entirely." — Peter Diamandis, Metatrends

Separately, the All-In podcast dedicated significant time to Anthropic's "generational run" and questioned whether OpenAI is in "focus mode or panic mode" — while Jason Calacanis made waves by predicting a 200x rally for Bittensor's TAO token, calling it the potential "Bitcoin of AI" and disclosing a personal investment of approximately $500 million in the decentralized AI network.

Diamandis' "machine building itself" thesis sounds hyperbolic until you look at the numbers. If TeraFab ships anywhere close to its targets, the compute bottleneck that has shaped every AI roadmap for the past five years ceases to exist. That doesn't just accelerate model training — it democratizes it. Smaller companies, universities, and governments that were priced out of the frontier suddenly have access. The downstream implications are enormous: more models, faster iteration, more agentic systems, and an even more urgent need for the governance frameworks we just discussed. The abundance Diamandis preaches isn't inherently good or bad — it's a multiplier. The question is what it multiplies.

Why It All Matters

Today's stories share a common thread: the AI industry is entering a phase of radical consolidation and divergence simultaneously. Microsoft is consolidating models into a single orchestration platform. OpenAI is consolidating around revenue-generating products by killing experiments. Anthropic is consolidating its position as the safety-conscious alternative — even as its most capable model raises the scariest cybersecurity questions in the industry. Meanwhile, AI governance is diverging: the federal government wants one rulebook, states are writing their own, and enterprises are caught in the middle.

For business leaders, the playbook is straightforward: adopt multi-model strategies, audit your shadow AI exposure, build to the strictest compliance standard, and treat compute abundance as both an accelerant and a risk multiplier. The companies that navigate this transition well will have invested in governance and orchestration — not just raw capability.
