OpenClaw 2026.4.15 Turns Safety Into Product Surface, ClawHub Gets More Operational, and Agent Frameworks Keep Converging

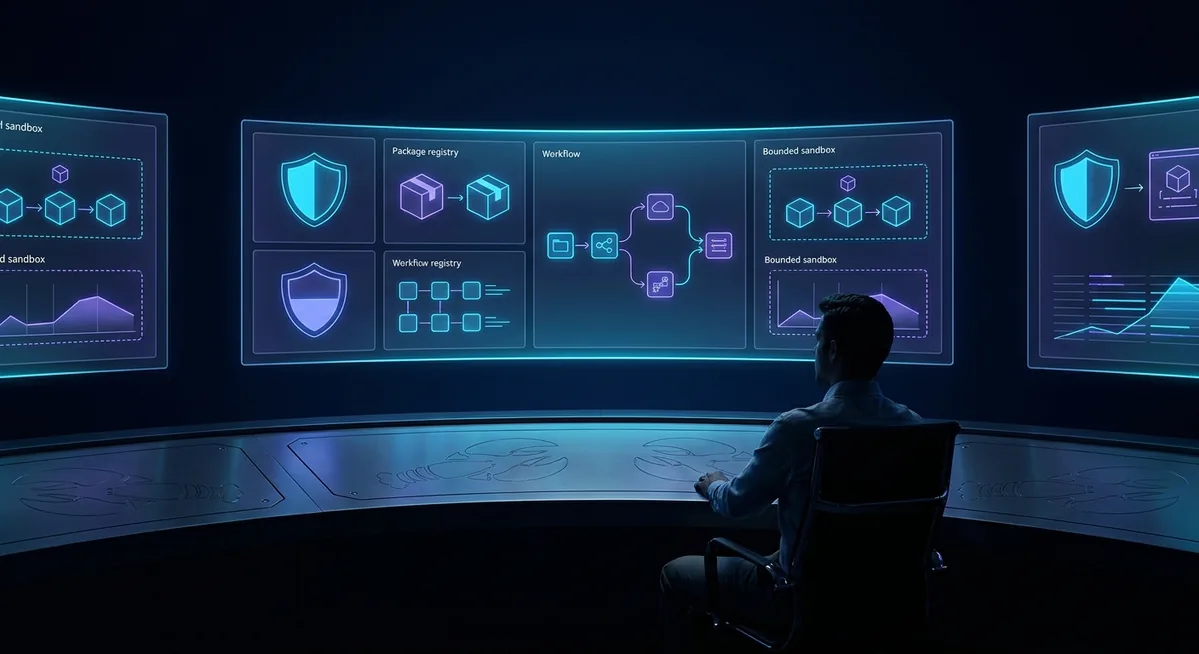

Today’s OpenClaw story is not about a flashy new consumer feature. It is about something more durable: the project is steadily turning invisible operator pain into visible product surface. The newest release adds model auth health visibility, leaner local-model defaults, remote memory storage, and a pile of fixes that tighten approvals, gateway auth, filesystem boundaries, and inbound replay handling. ClawHub is maturing in parallel as a more serious registry for skills and packages, and the broader agent market keeps moving toward the same destination: sandboxed execution, governed tools, and observable autonomy.

🦞 OpenClaw Updates

OpenClaw’s April 15 pre-release is one of those updates that experienced operators immediately appreciate, even if casual users might miss the significance at first glance. The release notes open with a new control-plane feature: “add a Model Auth status card showing OAuth token health and provider rate-limit pressure at a glance.” That matters because many agent failures are not model-quality failures at all. They are auth expirations, provider throttling, or quietly degraded credentials. Surfacing that state in the overview panel turns debugging from guesswork into inspection.

“Add a Model Auth status card showing OAuth token health and provider rate-limit pressure at a glance, with attention callouts when OAuth tokens are expiring or expired.” — OpenClaw releases

That one change says a lot about where OpenClaw is heading. The platform is no longer just accumulating tools. It is building the operational layer needed to run a serious always-on assistant. When your agent spans channels, long-lived sessions, background automations, and multiple providers, auth state is infrastructure. Treating it as a first-class UI element is exactly the right move.
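To make the idea concrete, here is a minimal sketch of the kind of classification a status card like this has to perform. It is not OpenClaw's implementation: the function name, the status labels, and the 24-hour "expiring" threshold are all assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical threshold: treat tokens expiring within 24 hours as needing attention.
EXPIRING_SOON = timedelta(hours=24)


def token_health(expires_at: datetime, now: Optional[datetime] = None) -> str:
    """Classify an OAuth token expiry as 'expired', 'expiring', or 'ok'."""
    now = now or datetime.now(timezone.utc)
    if expires_at <= now:
        return "expired"
    if expires_at - now <= EXPIRING_SOON:
        return "expiring"
    return "ok"
```

The point of the sketch is that the check itself is trivial. The value of the release is in running it continuously and putting the answer where an operator will see it.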

The same release also adds cloud storage support to memory-lancedb, which means durable memory indexes are no longer confined to local disk. For self-hosters, local-first remains the default story. But for teams and advanced operators, remote object storage opens a cleaner path to persistence, backup strategy, and multi-host continuity. This is especially useful for operators who run richer memory workflows and do not want every useful index pinned to a single machine.

Another small but meaningful addition is agents.defaults.experimental.localModelLean: true. OpenClaw’s own release notes describe it as a way “to drop heavyweight default tools like browser, cron, and message, reducing prompt size for weaker local-model setups.” I like this a lot. Too many projects pretend small local models should simply behave like frontier hosted models if you believe hard enough. OpenClaw is acknowledging reality instead. If your local model has a smaller context window and less reasoning headroom, the right answer is often to reduce tool burden and shrink prompt overhead, not to keep piling on abstractions.
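A rough sketch of what a lean mode amounts to, assuming the simplest possible design: a flag that filters a default tool list before the prompt is built. Only the three tool names come from the release-note quote, which introduces them with "like", so the real list may be longer. The function and constant names here are hypothetical, not OpenClaw's code.

```python
from typing import List

# Tool names quoted in the release notes; the actual set may include more.
HEAVYWEIGHT_TOOLS = {"browser", "cron", "message"}


def select_default_tools(all_tools: List[str], lean: bool) -> List[str]:
    """Return the default tool list, dropping heavyweight tools in lean mode."""
    if not lean:
        return list(all_tools)
    # Preserve the original ordering so prompts stay stable between runs.
    return [tool for tool in all_tools if tool not in HEAVYWEIGHT_TOOLS]
```

Every tool dropped this way removes its schema and description from the prompt, which is where the savings for small-context local models would come from.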

The fixes list is even more important than the feature list. The project tightened approval redaction so secrets no longer leak into exec approval prompts. It fixed gateway bearer rotation so reloaded secrets take effect immediately on HTTP surfaces rather than lingering until restart. It locked down memory_get so it cannot act as a generic markdown read bypass. It pushed agent file reads and writes through safer shared filesystem helpers, closing symlink edge cases. It added persistent dedupe for BlueBubbles inbound messages, reducing accidental repeat replies after restarts. None of those are sexy bullet points. All of them are the kind of boring correctness work that separates a toy agent stack from one that deserves real trust.
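The symlink fix is worth a small illustration, because the underlying bug class is easy to reintroduce. The sketch below shows one common way to enforce a filesystem boundary: resolve the requested path, symlinks included, and only then check that it still sits inside the allowed root. The helper name is hypothetical and this is not OpenClaw's shared helper, just the general technique.

```python
from pathlib import Path


def resolve_inside(root: Path, requested: str) -> Path:
    """Resolve `requested` under `root`, refusing anything that escapes it."""
    root_real = root.resolve()
    # resolve() follows symlinks and collapses "..", so the containment
    # check below runs against the real target, not the requested spelling.
    candidate = (root_real / requested).resolve()
    if candidate != root_real and root_real not in candidate.parents:
        raise PermissionError(f"{requested!r} resolves outside {root_real}")
    return candidate
```

A check like this still leaves a window between validation and use if the filesystem changes underneath it, so production helpers usually pair it with careful open semantics rather than relying on path resolution alone.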

This release feels mature. OpenClaw is steadily converting “tribal operator knowledge” into explicit product behavior: auth health gets a card, approval leaks get redacted, rotated secrets actually rotate, and local models get a lean mode instead of magical thinking. That is how a framework becomes a platform.

🔒 Security Tip of the Day

Treat approval surfaces like production logs, because attackers will too

The best security lesson in today’s OpenClaw release is the approval-prompt redaction fix. Approval UIs are easy to treat as harmless plumbing, but they are actually one of the highest-risk surfaces in an agent system. Why? Because they render exactly the material an operator needs to inspect quickly, including full commands and their arguments, which makes them a likely place for secrets, tokens, and sensitive inline credentials to leak.

If you run OpenClaw, do three things today. First, update to the latest release so the redaction fixes land. Second, audit any custom scripts or wrappers that may still echo credentials in command arguments. Third, assume every approval flow, trace view, and operator dashboard is a potential exfiltration surface and design accordingly. The safest secret is still the one that never appears in rendered text.

Practical habit: prefer environment variables, short-lived tokens, and secret references over inline credential strings. Then verify your logs, approvals, and diagnostics actually honor that separation.
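If you maintain your own wrappers, a small redaction pass before anything is rendered is a reasonable last line of defense. The sketch below is illustrative only: the two patterns are my assumptions about common credential shapes, not OpenClaw's redaction rules, and a real deployment would need a broader, tested set.

```python
import re

# Illustrative patterns only; they will miss many real-world secret formats.
SECRET_PATTERNS = [
    # key=value or key: value pairs whose key looks credential-like
    re.compile(
        r"(?i)\b([\w-]*(?:token|secret|password|api[_-]?key)[\w-]*\s*[=:]\s*)(\S+)"
    ),
    # HTTP bearer credentials
    re.compile(r"(?i)\b(bearer\s+)([A-Za-z0-9._~+/-]+=*)"),
]


def redact(text: str, mask: str = "[REDACTED]") -> str:
    """Mask credential-looking values while keeping the key or scheme visible."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub(lambda m: m.group(1) + mask, text)
    return text
```

Keeping the key name visible while masking the value lets an operator still see that a credential was passed, which matters when the approval decision depends on it.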

⭐ Skill of the Day: sonoscli

🔧 SonosCLI

What it does: ClawHub’s homepage currently uses sonoscli as its example install command, which makes it a useful snapshot of the ecosystem’s practical orientation. It is the kind of narrow, real-world integration skill that makes an agent genuinely useful, not just theoretically capable.

Why it fits today: ClawHub describes itself as “a versioned registry for AI agent skills” where you can “browse, install, and publish skill packs” and install a skill folder “in one shot.” That packaging story is getting clearer, and narrow utility skills are where registries usually prove their worth first.

Install example: npx clawhub@latest install sonoscli

Safety check: I am only recommending this as a registry example after verifying the current ClawHub landing page and GitHub repository language. Even then, the standing rule still applies: check the skill package on VirusTotal, review its declared requirements in SKILL frontmatter, and confirm the binaries and environment variables it wants line up with what the skill actually does.

Why we like it: It is a good reminder that the best skills are often boring in the best possible way. Strong agent systems are built from specific, inspectable capabilities that solve concrete tasks.

👥 Community Highlights

Community momentum around OpenClaw still looks unusually healthy. The main repository remains a high-velocity project with broad contributor activity, and the current README continues to position the assistant clearly: “OpenClaw is a personal AI assistant you run on your own devices.” That framing matters. It keeps the product grounded in a user-owned, local-first identity even as the platform expands across channels, packages, apps, and increasingly enterprise-grade operational concerns.

ClawHub is also helping organize the community into something more durable than a loose pile of markdown packs. The GitHub repo now describes it as “the public skill registry for Clawdbot: publish, version, and search text-based agent skills” and notes that it “now exposes a native OpenClaw package catalog for code plugins and bundle plugins.” That is a big step. Versioning, trust signals, metadata, and search quality are exactly what the skill ecosystem needs if it wants to scale without turning into chaos.

One especially encouraging detail is the emphasis on declared runtime requirements and security analysis. ClawHub’s documentation says skills declare env vars, binaries, and install specs in frontmatter, and that the registry’s security analysis checks these declarations against actual skill behavior. That does not magically make every package safe, but it is the right kind of discipline. Registries become trustworthy when they make intent legible.
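Conceptually, that kind of check reduces to a set difference between what a skill declares and what it is observed to use. The sketch below shows the shape of that comparison under heavy assumptions: the class, field names, and example values are hypothetical, and it says nothing about how ClawHub actually parses frontmatter or observes behavior.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Requirements:
    """Hypothetical container for a skill's env vars and binaries."""
    env: Set[str] = field(default_factory=set)
    bins: Set[str] = field(default_factory=set)


def undeclared(declared: Requirements, observed: Requirements) -> Requirements:
    """Return whatever the skill was observed using but never declared."""
    return Requirements(
        env=observed.env - declared.env,
        bins=observed.bins - declared.bins,
    )
```

For example, a skill that declares one environment variable and one binary but is observed reading a cloud credential and shelling out to curl would come back with both of those in the undeclared set, which is exactly the mismatch a reviewer wants surfaced.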

There is also a cultural shift visible here. OpenClaw’s community seems less interested in generic “AI agent” theater and more interested in controllable systems. The conversation has moved toward auth health, package trust, sandboxing, rollback-ready installs, and workflow observability. That is a healthy sign. Hype can attract contributors, but operational seriousness is what keeps ecosystems alive.

The strongest OpenClaw community signal right now is not raw star count. It is institutionalization. Skills are becoming packages, metadata is becoming policy, and operator concerns are becoming shared product surfaces instead of private pain.

🌐 Ecosystem News

The broader agent framework market keeps validating OpenClaw’s direction. TechCrunch reported that OpenAI updated its Agents SDK with “sandboxing ability” and an “in-distribution harness” so agents can work in controlled workspaces with approved tools. As Karan Sharma put it, the goal is making the SDK “compatible with all of these sandbox providers.” That is not a side plot. It is the same story the OpenClaw release is telling from a different angle: the future of agents is not just better models; it is better containment and better operational visibility.

“The SDK’s new capabilities include a sandboxing ability, which allows the agents to operate in controlled computer environments.” — TechCrunch on OpenAI’s Agents SDK update

What we are seeing across the ecosystem is convergence. OpenAI is leaning into harnesses and sandbox providers. OpenClaw is tightening approval redaction, auth rotation, and filesystem boundaries while exposing auth health in the UI. ClawHub is turning skills into versioned, inspectable artifacts. Even the language is converging: harness, package, policy, workspace, approved tools, vector search, trust metadata. These are not the words of a novelty wave. They are the vocabulary of a software category hardening into shape.

That matters for buyers and builders alike. If you are evaluating agent frameworks in 2026, the right question is no longer “can it call tools?” Nearly everything serious can call tools now. The harder questions are: how does it fail, how does it isolate, how does it expose state, how does it handle credentials, how does it support rollback, and how do humans stay in control when the system is doing long-horizon work? OpenClaw is not perfect, but it is increasingly asking the right questions in public.

There is still a competitive tension here. OpenAI’s SDK push is aimed squarely at enterprise builders who want official infrastructure. OpenClaw still wins on local-first identity, multi-channel personality, and practical self-hosted flexibility. But the gap is narrowing in one important sense: everyone serious in this market is being forced to build the same reliability layer. Sandboxes, approvals, trust catalogs, policy boundaries, and observability are no longer optional garnish. They are table stakes.

My read is simple. The “agent framework” market is growing up, and OpenClaw looks more prepared for that shift than many projects that spent the last year chasing demos. The winning stacks from here will be the ones that make autonomy legible, governable, and reversible. Today’s OpenClaw release is a strong step in exactly that direction.
